Latest posts by smcameron

Aug 18, 2014

by smcameron

The August 2014 meeting of the Houston Recreational Computer Programming Group was held this past Sunday, on the 10th. Pretty good attendance this time, as has now become rather usual. I failed to provide a writeup for the July meeting last month, so that is included below as well.

Jun 11, 2014

by smcameron

Another meeting of the Houston Recreational Computer Programming Group was held this past Sunday, June 8th, 2014. We had a great turnout this time; if many more people start showing up, we'll need to find a bigger room.

Apr 13, 2014

by smcameron

The 16th meeting of the Houston Recreational Computer Programming Group was held Sunday, April 13th, 2014. Pretty good turnout and lots of interesting things were presented, including a Little Man Computer emulator, fake computational fluid dynamics, and a cool 2.5D space game reminiscent of the vintage video game "Star Control".

Feb 10, 2014

by smcameron

February 9, 2014 -- Today the 14th meeting of the Houston Recreational Computer Programming Group was held at TX/RX Labs. I'd like to thank everyone who showed up. We had a great turnout with lots of new people this time and I hope we can continue growing and seeing more ...

Dec 4, 2013

by smcameron

rtavk3 writes: Howdy, it's time for your monthly what's happening at the lab! Things are a little slow these days with the Holidays in effect. Hope everyone is having a great time and will be back in full force in the new year. Anyway there is still a lot going ...

Nov 21, 2013

by smcameron

The eleventh meeting of the Houston Recreational Computer Programming Group was held Sunday, November 10th, 2013. Stephen Cameron and Jeremy Van Grinsven talked about how they used quaternions in the game Space Nerds in Space to represent the orientation of objects within the game as well as rotations and incrementally ...

Oct 14, 2013

by smcameron

The Houston Recreational Computer Programming Group met for the tenth time this past Sunday, October 13th, at TX/RX Labs to bask in the LCD glow of computer monitors and share the latest goings-on in local recreational computer programming. First up, Frank Davies showed off his musical contraption, a 3D-printed ...

Sep 9, 2013

by smcameron

rtavk3 writes: Howdy, September starts and so it's time for another lab update to keep you abreast of the happenings around the lab. CLASSES!! are posted at http://classes.txrxlabs.org. Let everyone know about it and spread the word. Also make sure to sign up soon as some of our popular ...

Sep 9, 2013

by smcameron

This past Sunday, September 8th, 2013, the 9th meeting of the Houston Recreational Computer Programming Group was held at TX/RX Labs. Patrick Wheeler discussed stream processing with pull-based acyclic graphs using the Pipes package in Haskell. There was some discussion about how this way of doing things might be ...

Aug 17, 2013

by smcameron

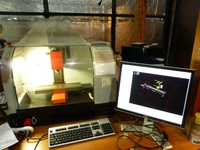

Mike Gilsdorf has lent the lab a mini mill, a perfect companion to Jeremy's mini lathe in the light fabrication area. According to Mike: It's a 3-axis Emco PC Mill 55. Made in Austria. Somewhere near Salzburg if I'm not mistaken. Right now the only modifications are to the ...

Aug 13, 2013

by smcameron

This past Sunday, August 11th, 2013, the 8th meeting of the Recreational Computer Programming Group convened to discuss the latest things going on in the local Recreational Programming scene. Things were a little light this month, with only your moderator, yours truly, giving an informal presentation. Chris Cauley casually mentioned ...

Aug 12, 2013

by smcameron

Here's an update from rtavk3 about all the cool stuff happening around TX/RX Labs lately: Summer is almost over but even with the heat lots of cool stuff has been happening down at the lab. Here is an update for those of you who missed out due to vacations, etc.! ...

Jul 18, 2013

by smcameron

This past Sunday, July 14th, 2013, the 7th meeting of the Recreational Computer Programming Group convened to discuss the latest goings-on in local Recreational Computer Programming activities. Patrick Wheeler presented a prototype reactive[1] web GUI framework built in Haskell with the pipes-concurrency[2] package and compiled to JavaScript with ghcjs[3][4], ...

Jul 1, 2013

by smcameron

This past Friday, June 28, 2013, Space Nerds In Space, a multi-player cooperative networked starship simulator game by member Steve Cameron (that's me) was unleashed on unsuspecting fellow hackers and we all had lots of fun cooperatively blowing aliens out of the interstellar sky. Initially, we got off to a ...

Jun 9, 2013

by smcameron

The 6th meeting of the Recreational Computer Programming Group was held on June 9th, 2013 at Houston's hackerspace, TX/RX Labs. Chris Ertel presented an interesting web app from the Multi Robot Systems Lab at Rice University which allows you to control a simulated swarm of robots to perform various tasks. ...

Mar 10, 2013

by smcameron

The third meeting of the Recreational Computer Programming Group took place today, March 10, 2013, and was well attended. Edwin G. presented some slides about the ant colony optimization algorithm, which has applications in scheduling, routing and other areas. He also presented some information about the Maven build system used by ...

Mar 2, 2013

by smcameron

A couple weeks ago TX/RX member Jeremy G. picked up a broken CNC mini-lathe at a local pawn shop for fifty bucks and brought it in. Within a few hours, he and other members Chris K., Roland K., and Greg S. had it up and running under the control of ...

Feb 16, 2013

by smcameron

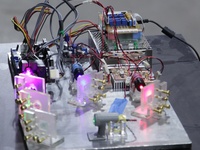

If you missed the open house this past Friday night (02/15/2013) at TX/RX Labs, you missed a good show. Jeremy Van Grinsven brought in the RGB laser projector he's been working on over the last month. The projector contains three lasers: red, blue and green. The beams are conjoined by ...

Feb 11, 2013

by smcameron

This past Sunday, Feb. 10th, the 2nd meeting of the Recreational Computer Programming Group was held. Chris Ertel showed us some cool effects dynamically applied to webcam output via OpenGL shaders, and some interesting procedurally generated maps for some sort of zombie infection game. Marlin Mixon demonstrated an Android app ...

Oct 6, 2012

by smcameron

Over the course of a week or so, TX/RX Labs member Jeremy Van Grinsven built this amazing Arduino-driven, edge-lit, laser-etched acrylic zoetrope showing an animation derived from another TX/RX member’s video game, Word War vi [full disclosure, that other member is me. -- Ed.] Here it is on YouTube ...

Sep 9, 2012

by smcameron

Always wanted to build a go-kart but don’t know how to weld? Or maybe you would like to make an iPhone game, but don’t know how to program? Or perhaps you have ideas for some cool electronics projects, but don’t really know how to get started? More likely, you have ...

Aug 10, 2012

by smcameron

3D Art Houston is a relatively new artists’ group focused on three-dimensional art forms. 3D Art Houston members will be meeting at TX/RX Labs on Wednesday, August 15th at 6 p.m. to gain a better understanding of the resources and talents there. Members work in clay, iron, glass, wood, textiles, ...

About TXRX Labs

Established in 2008, TXRX Labs is a non-profit hackerspace for the greater Houston area. Housed in the East End District, we offer courses in and access to our rapid prototyping lab, woodshop, machine shop, electronics lab, and a wide variety of other tools. Our goal is to educate the public about technology and show how seemingly complex techniques can be used by anyone. If you like what we do, please donate.

This Week at TXRX Labs

- 04/20 - Brazing I: Oxy/Acetylene 101: Heating, Cutting, Brazing

- 04/22 - Office Hours

- 04/22 - Welding I: MIG

- 04/22 - Ceramics Boot Camp

- 04/23 - Ion Prototyping Lab Office Hours

- 04/23 - Woodworking I: Woodshop Tools

- 04/23 - Jewelry I: Silver Chain Bracelet

- 04/23 - Laser Cutting I

- 04/24 - Office Hours

- 04/24 - Woodworking I: Woodshop Tools

- 04/24 - Jewelry I: Silver Chain Bracelet

- 04/25 - CNC Router Using VCarve

- 04/26 - Ion Prototyping Lab Office Hours